Securing Hadoop Big Data Landscape with Apache Knox Gateway and Keycloak: Part 3 (Reference Architecture for Securing Hadoop Landscape)

The sample problem

In a typical enterprise environment, we will have a Hadoop distribution that requires Kerberos authentication. The problem with Kerberos is that it is complicated, and it requires special clients to access the cluster services.

You might also have a heterogeneous application architecture that interfaces with a variety of authentication mechanisms (such as OAuth and SAML), with users stored in databases or Active Directory as well as social accounts.

The solution architecture

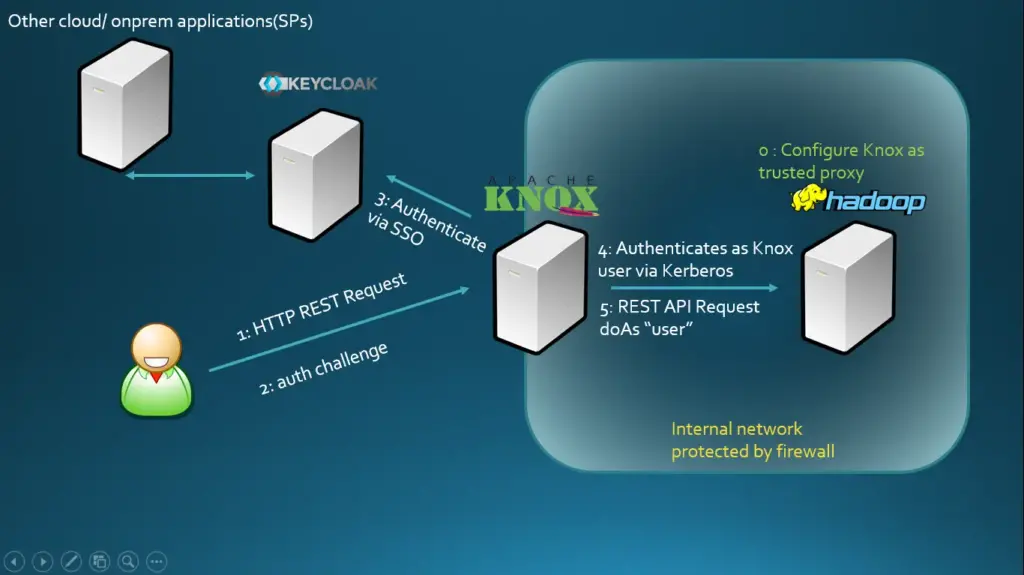

Here, we will set up a Keycloak server that can be configured to authenticate against an AD server. Apache Knox can then be configured to delegate authentication to Keycloak via SAML.

You can reuse the Keycloak server to protect your microservices, using any of the authentication providers it supports. And hey, if you already have an existing IdP, you can always configure Keycloak to delegate authentication to it without breaking anything.

Apache Knox should be configured as a trusted proxy in Hadoop so that it can perform operations on behalf of authenticated users using Hadoop's 'doAs' operation, and all applications and users should be proxied via Knox.
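To make the trusted proxy idea a bit more concrete, here is a minimal sketch that generates the `hadoop.proxyuser.<user>.hosts` and `hadoop.proxyuser.<user>.groups` properties Hadoop reads from `core-site.xml` to decide who may impersonate other users. The service account name `knox`, the gateway host name, and the group name are assumptions for illustration only, and the helper functions are hypothetical, not part of Hadoop or Knox.

```python
import xml.etree.ElementTree as ET


def proxyuser_properties(proxy_user, hosts, groups):
    """Build the core-site.xml properties that let `proxy_user` impersonate others."""
    return {
        # Hosts from which the proxy user may submit impersonated requests.
        f"hadoop.proxyuser.{proxy_user}.hosts": ",".join(hosts),
        # Groups whose members the proxy user is allowed to impersonate.
        f"hadoop.proxyuser.{proxy_user}.groups": ",".join(groups),
    }


def to_core_site_xml(properties):
    """Render a properties dict as a Hadoop <configuration> XML fragment."""
    root = ET.Element("configuration")
    for name, value in properties.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    return ET.tostring(root, encoding="unicode")


# Assumed values: a "knox" service account running on a single gateway host.
props = proxyuser_properties(
    "knox", ["knox-gateway.example.internal"], ["hadoop-users"]
)
print(to_core_site_xml(props))
```

Restricting the hosts and groups to specific values, rather than using the `*` wildcard, keeps the impersonation rights as narrow as possible.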

The Hadoop infrastructure can be secured using firewalls and none of the endpoints need to be exposed publicly.
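With the cluster behind a firewall, clients only ever talk to the Knox gateway URL. The sketch below builds such a URL for a WebHDFS call, following Knox's usual `https://<host>:<port>/<gateway-path>/<topology>/webhdfs/v1` pattern. The host name, the `default` topology, and the helper function are assumptions for illustration; port `8443` and the `gateway` path are the stock Knox defaults and may differ in your install.

```python
from urllib.parse import quote, urlencode


def knox_webhdfs_url(gateway_host, topology, hdfs_path, op,
                     port=8443, gateway_path="gateway"):
    """Build the Knox-proxied URL for a WebHDFS operation on `hdfs_path`."""
    base = f"https://{gateway_host}:{port}/{gateway_path}/{topology}/webhdfs/v1"
    # quote() keeps "/" intact and escapes characters that are unsafe in a path.
    return f"{base}{quote(hdfs_path)}?{urlencode({'op': op})}"


# Assumed gateway host and topology name.
url = knox_webhdfs_url("knox.example.com", "default", "/tmp", "LISTSTATUS")
print(url)
```

The client never needs a Kerberos ticket or a direct route to the NameNode; it only needs to reach the gateway and authenticate there.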
